To fight the spread of child sexual abuse material (CSAM), a coalition of top generative AI developers—including Google, Meta, and OpenAI—vowed to enforce guardrails around the emerging technology.

The group was pulled together by two non-profit organizations: children's tech group Thorn and New York-based All Tech is Human. Formerly known as the DNA Foundation, Thorn was launched in 2012 by actors Demi Moore and Ashton Kutcher.

The collective pledge was announced Tuesday along with a new Thorn report advocating a “Safety by Design” principle in generative AI development that would prevent the creation of CSAM across the entire lifecycle of an AI model.

“We urge all companies developing, deploying, maintaining, and using generative AI technologies and products to commit to adopting these Safety by Design principles and demonstrate their dedication to preventing the creation and spread of CSAM, AIG-CSAM, and other acts of child sexual abuse and exploitation,” Thorn said in a statement.

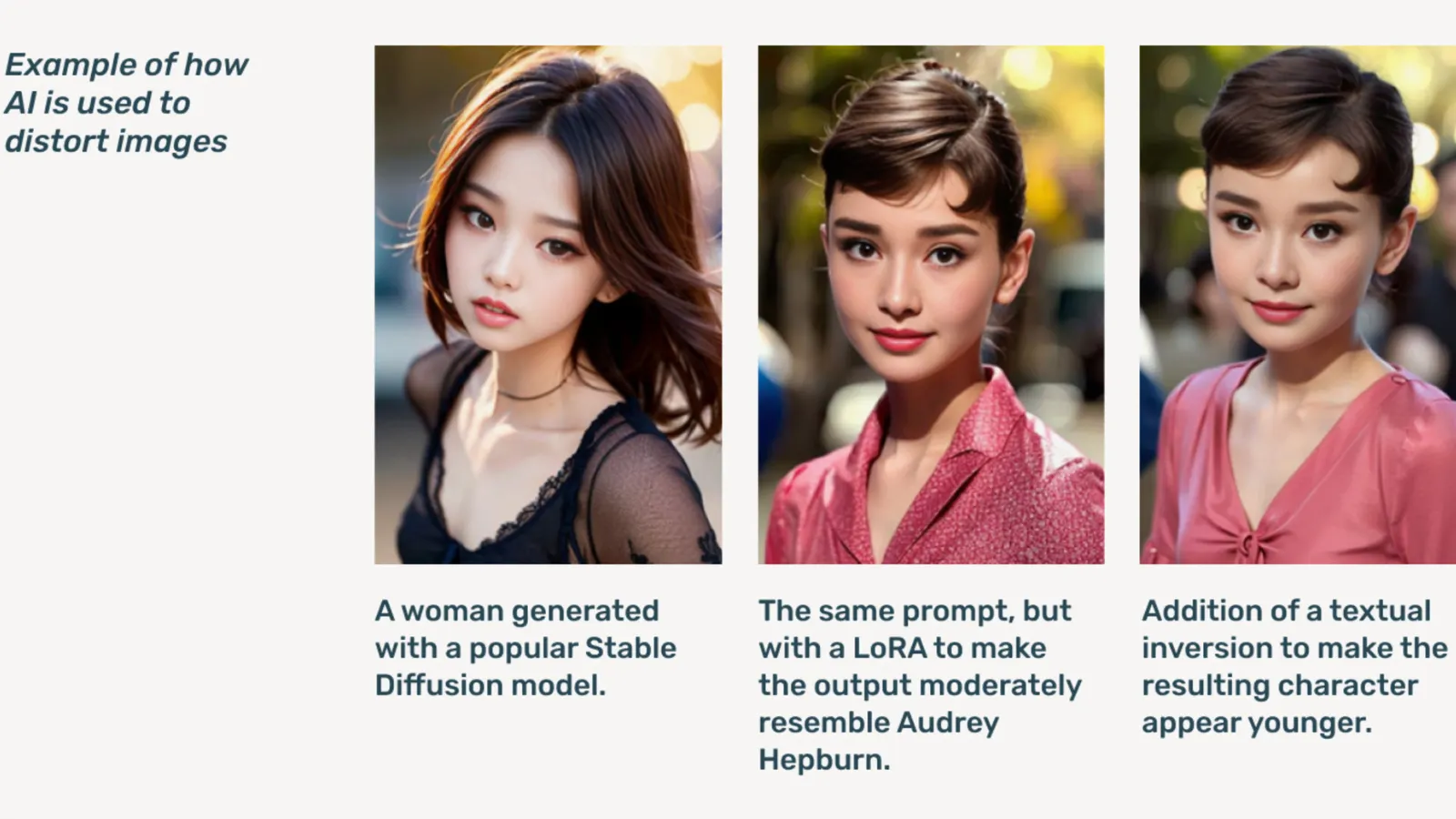

AIG-CSAM is AI-generated CSAM, which the report illustrates can be relatively easy to create.

Thorn develops tools and resources focused on defending children from sexual abuse and exploitation. In its 2022 impact report, the organization said more than 824,466 files containing child abuse material were found. Last year, Thorn said, more than 104 million files of suspected CSAM were reported in the U.S. alone.

Already a problem online, deepfake child pornography skyrocketed after generative AI models became publicly available, with stand-alone AI models that don’t need cloud services being circulated on dark web forums.

Generative AI, Thorn said, makes creating large volumes of content easier than ever before. A single child predator could potentially create massive volumes of CSAM, including by adapting original images and videos into new content.

“An influx of AIG-CSAM poses significant risks to an already taxed child safety ecosystem, exacerbating the challenges faced by law enforcement in identifying and rescuing existing victims of abuse, and scaling new victimization of more children,” Thorn notes.

Thorn’s report outlines a series of principles the generative AI developers would follow to prevent their technology from being used to create child pornography, including responsibly sourcing training datasets, incorporating feedback loops and stress-testing strategies, employing content history or “provenance” with adversarial misuse in mind, and responsibly hosting their respective AI models.

Others signing onto the pledge include Microsoft, Anthropic, Mistral AI, Amazon, Stability AI, Civitai, and Metaphysic, each releasing separate statements today.

“Part of our ethos at Metaphysic is responsible development in an AI world, right, it's about empowering, but it's about responsibility,” Metaphysic chief marketing officer Alejandro Lopez told Decrypt. “We quickly recognize that to start and develop that means literally safeguarding the most vulnerable in our society, which are children, and unfortunately, the darkest end of this technology, which is being used for child sexual abuse material in the forms of deep fake pornography, that has happened.”

Launched in 2021, Metaphysic came to prominence last year after it was revealed several Hollywood stars, including Tom Hanks, Octavia Spencer, and Anne Hathaway, were using Metaphysic Pro technology to digitize characteristics of their likeness in a bid to retain ownership over the traits necessary to train an AI model.

OpenAI declined to comment further on the initiative, instead providing Decrypt a public statement from its child safety lead Chelsea Carlson.

“We care deeply about the safety and responsible use of our tools, which is why we’ve built strong guardrails and safety measures into ChatGPT and DALL-E,” Carlson said in a statement. “We are committed to working alongside Thorn, All Tech is Human and the broader tech community to uphold the Safety by Design principles and continue our work in mitigating potential harms to children.”

Decrypt reached out to other members of the coalition but did not immediately hear back.

“At Meta, we’ve spent over a decade working to keep people safe online. In that time, we’ve developed numerous tools and features to help prevent and combat potential harm—and as predators have adapted to try and evade our protections, we’ve continued to adapt too,” Meta said in a prepared statement.

“Across our products, we proactively detect and remove CSAE material through a combination of hash-matching technology, artificial intelligence classifiers, and human reviews,” Google's vice president of trust and safety solutions Susan Jasper wrote in a post. “Our policies and protections are designed to detect all kinds of CSAE, including AI-generated CSAM. When we identify exploitative content we remove it and take the appropriate action, which may include reporting it to NCMEC.”

In October, UK watchdog group the Internet Watch Foundation warned that AI-generated child abuse material could “overwhelm” the internet.

Edited by Ryan Ozawa.