Qualcomm, a titan in the realm of high-end smartphone processors, is gearing up to unveil the third generation of its Snapdragon 8 chip. The announcement is particularly noteworthy given that its predecessor, the Snapdragon 8 Gen 2, currently ranks as the second most powerful smartphone chip ever according to Antutu benchmarks, bested only by the Dimensity 9200+ developed by Taiwanese manufacturer MediaTek.

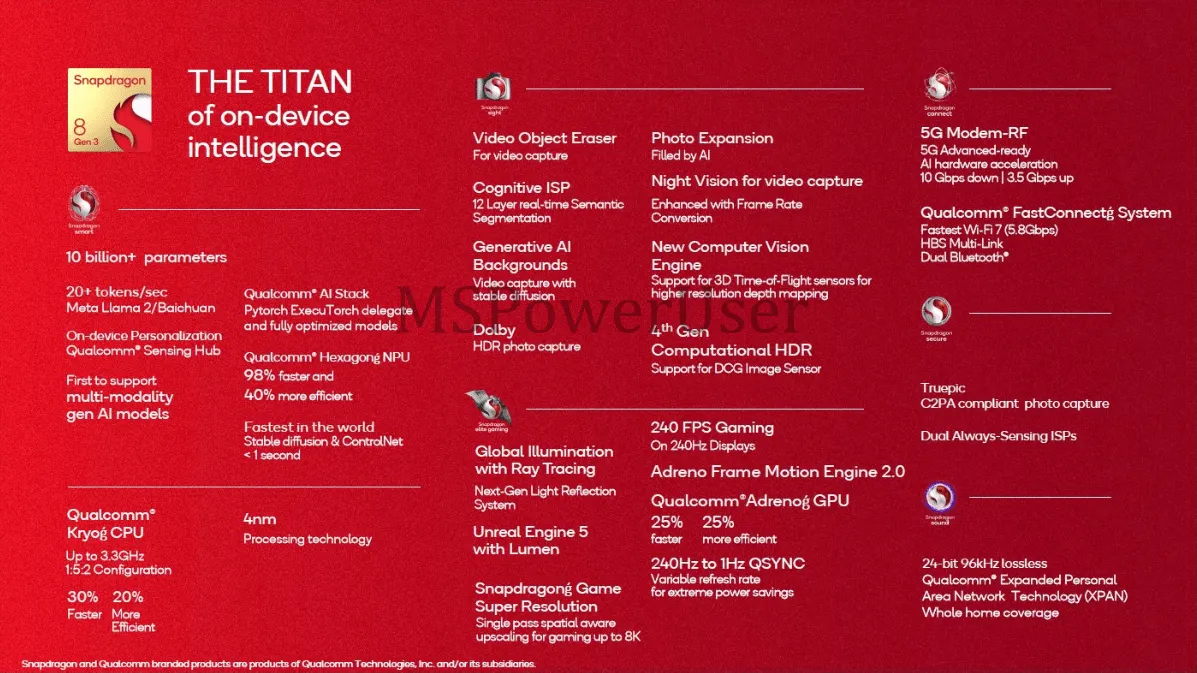

The chip is expected to be unveiled officially on Tuesday, but details leaked online on Monday. It promises not just enhanced performance but a significant leap in on-device AI capabilities, according to MS Poweruser.

In addition to the expected incremental performance improvements, such as a 25% faster GPU and a 30% faster CPU, the new system-on-a-chip (SoC) has a powerful AI engine capable of supporting high precision in local AI models, and can run local large language models (LLMs) of over 10 billion parameters. The chip can even run the text-to-image model Stable Diffusion with the ControlNet neural network.

Mobile hardware manufacturers are pivoting decisively toward AI. Google, for instance, has revamped its Tensor chips, equipping them to handle the many AI features that the latest Pixel phones run locally, bypassing the need for cloud processing. Similarly, Apple has upped its game with the A17 Pro SoC, enhancing its AI prowess.

The advent of AI-capable chips in consumer devices heralds a transformative era in technology. These advanced processors, designed to run intricate AI models locally, hint at a future brimming with possibilities, from personalized chatbot assistants and hyper-realistic gaming experiences to real-time health monitoring and adaptive user interfaces.

The promise of faster processing, coupled with better personalization and more privacy, positions these chips as the cornerstone of a future where our devices aren't just smart, but intuitively attuned to our needs and preferences.

Kent Keirsey is the CEO of Invoke AI, a company at the forefront of AI image generation that harnesses the power of Stable Diffusion. He told Decrypt that running AI on a device rather than in the cloud comes with a lot of advantages.

"Cloud-based compute is a great option for larger enterprises. However, for individuals looking to create content, on-device AI offers a more affordable, personalized, and private alternative," he said. He also emphasized the potential of Apple's approach of deploying a larger pool of unified memory, suggesting a future with a potent local LLM assistant instead of a cloud-based Siri, for example.

However, as powerful as this new chip is, Invoke has no plans to port its app to Android or iOS devices, Keirsey said.

"Our commercial solution is typically used for more intricate workflow processes," he said. "While we don't have immediate plans for a local, OSS Android app, we're keenly observing the trajectory of usage patterns for creatives and the emergence of cutting-edge technology that supports mobile deployment."

Right now, the go-to option for local image generation is the Draw Things app on iOS. There is no comparable option for Android users.

Keirsey was optimistic about the ripple effects of Qualcomm's new chip in the broader tech ecosystem.

"Offering this chip for on-device AI improves the capabilities that can be deployed on mobile devices," he explained, adding that he expects to see app developers incorporating on-demand AI capabilities into their services as hardware gets more capable.

Yet, every innovation has its challenges. Keirsey pointed to potential issues with distributing model weights to end devices, especially for proprietary models like GPT-3 or GPT-4, which are huge and not open source.

"This can be non-viable for a proprietary model where managing intellectual property is paramount," he said. However, Keirsey was quick to spotlight open-source models like Stable Diffusion or Mistral 7B as potential game-changers.

As reported by Decrypt, Mistral 7B is a lightweight model with 7 billion parameters that has been making waves in the AI community. Decrypt tested its replies against those of other, more powerful competitors like LLaMA and Stable Beluga 2, and it beat their outputs. For context, while Mistral 7B has 7 billion parameters, GPT-4 is reported to have a staggering 1.7 trillion, a figure OpenAI has not confirmed.
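
To put those parameter counts in terms of what a phone would have to store, here is a rough back-of-the-envelope sketch. It assumes the weights dominate the footprint and that each parameter is stored at 16, 8, or 4 bits. Those bit widths are illustrative assumptions, not figures from Qualcomm or Mistral, and the function and variable names are hypothetical.

```python
# Back-of-the-envelope estimate of how much storage a model's weights need:
# size = number of parameters x bits per parameter / 8.
# Assumption: only the weights are counted; activations, caches, and
# runtime overhead are ignored, so real-world usage would be higher.

def weight_size_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate size of the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


# Parameter counts mentioned in the article.
MODELS = {
    "Mistral 7B": 7e9,
    "10-billion-parameter LLM": 10e9,
}

# Illustrative precisions (an assumption, not vendor-confirmed settings).
BIT_WIDTHS = (16, 8, 4)


def size_table(models=MODELS, bit_widths=BIT_WIDTHS):
    """Return {model name: {bits per parameter: size in GB}}."""
    return {
        name: {bits: weight_size_gb(count, bits) for bits in bit_widths}
        for name, count in models.items()
    }


if __name__ == "__main__":
    for name, sizes in size_table().items():
        row = ", ".join(f"{bits}-bit: {gb:.1f} GB" for bits, gb in sizes.items())
        print(f"{name}: {row}")
```

Under these assumptions, the same model needs a quarter of the space at 4 bits that it does at 16, which is one reason smaller open models are the more natural fit for on-device use.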

Qualcomm's Snapdragon 8 Gen 3 is more than a microchip; it's a harbinger of an AI-centric mobile future. With industry leaders like Qualcomm, Google, and Apple leading the way, it's just a matter of time before your assistant can keep all your secrets and still be genuinely useful in answering questions and performing tasks.

Edited by Ryan Ozawa.