Despite the warning shot heard around the world—an open letter signed by hundreds of industry leaders declaring artificial intelligence an existential threat to humanity—a new survey of over 2,700 AI experts paints a less dire picture of the future.

The comprehensive AI Impacts survey, conducted with the help of researchers from the Universities of Oxford, Berkeley and Bonn, asked AI researchers about the pace of progress in the field and the potential impact of advanced AI systems. While a wide range of perspectives were compiled, only around 5% felt there was a high chance of human extinction from AI.

This contrasts sharply with the sentiments of the general public.

An August 2023 poll found that 61% of Americans see AI as a threat to humanity. The concern was echoed by industry leaders who signed a Center for AI Safety letter saying that "mitigating the risk of extinction from AI should be a global priority."

Paul Christiano, former chief of alignment at OpenAI, estimated a 50% chance of AI ending in “catastrophe.” Many have declared AI a threat on par with nuclear war or a global pandemic.

Despite these widely covered pessimistic predictions, AI continues to evolve. Companies are racing to develop more advanced systems, with OpenAI's CEO Sam Altman advocating for regulations alongside a push to test the boundaries of the technology.

On AI capabilities, many survey respondents predicted that AI would achieve major creative milestones within 10 years: coding an entire payment website from scratch, for example, or creating songs indistinguishable from today's hits. But timelines stretched longer for challenges like mathematical theorem proving.

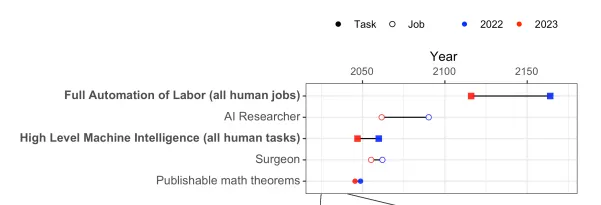

More specifically, the possibility of High-Level Machine Intelligence (HLMI) becoming a reality has been pulled 13 years closer, with a 50% probability of success predicted by 2047. Similarly, the Full Automation of Labor (FAOL) horizon—one of Elon Musk’s controversial predictions—has shifted 48 years earlier to 2116.

When asked about the ability of AI to match all human abilities, researchers forecast a 50% chance by 2047. That's 13 years sooner than the forecast from a similar survey in 2022.

Respondents were split on whether faster or slower progress would benefit humanity more.

Interestingly, while the AI systems of 2043 are expected to be able to find unexpected ways to achieve goals, researchers are less confident that humans will be able to explain such actions. This ambiguity in how AI decision-making works highlights the need to prioritize AI safety research, a sentiment echoed by 70% of respondents.

Views differed on whether AI in 2028 could be trusted to explain its own reasoning.

Substantial concern also exists around risks like misinformation, inequality, and mass surveillance enabled by AI.

While researchers are cautiously optimistic overall, around 40% think there is a small but nonzero chance of human extinction from advanced AI. So while killer robots aren't imminent, studying AI ethics and safety remains critical.

Edited by Ryan Ozawa.