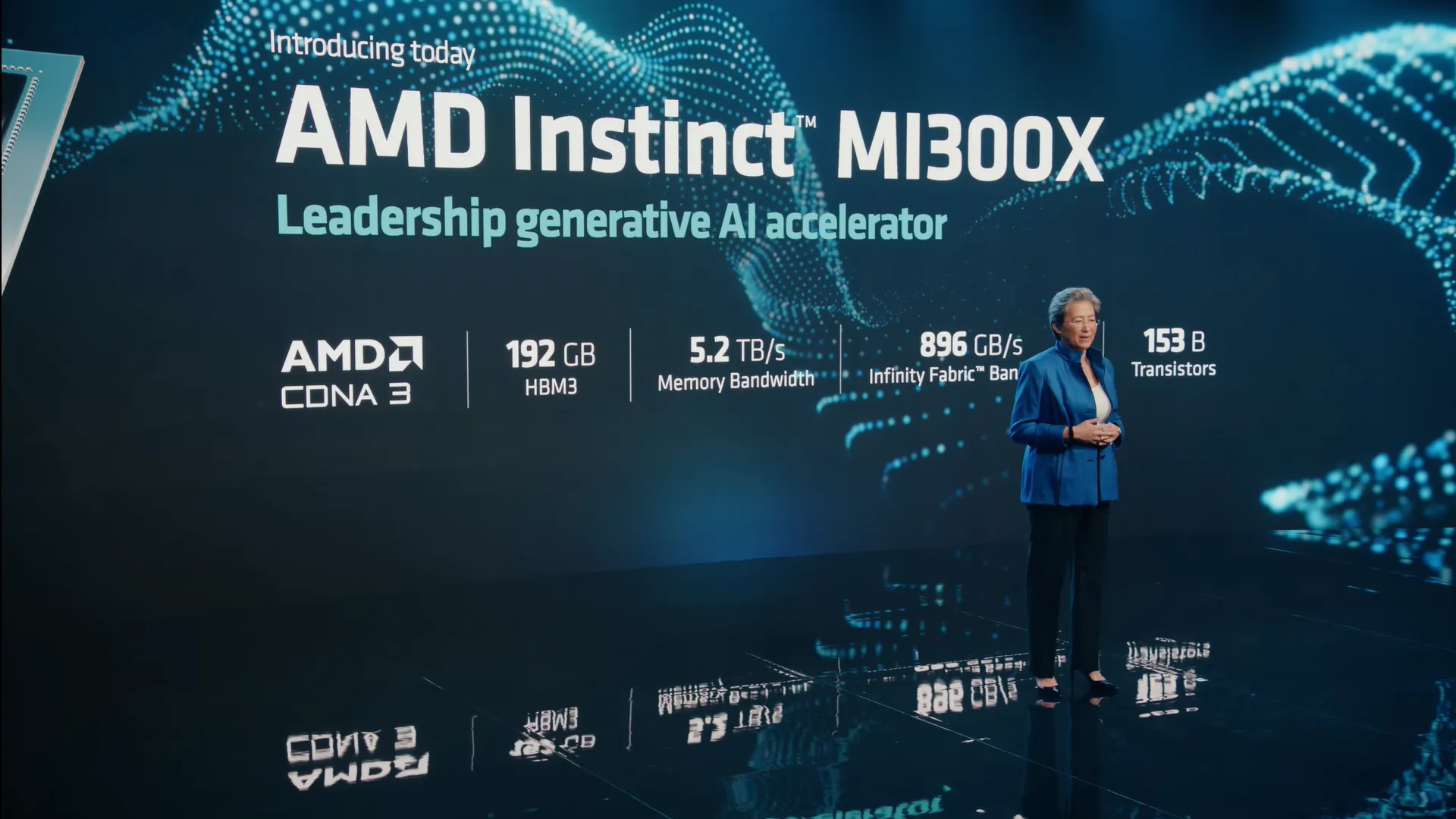

AMD has unveiled its new MI300X AI accelerator, a direct challenge to NVIDIA's reigning H100, as it moves to capitalize on the industry's frenzy over AI. The chip is the latest addition to AMD's AI lineup and its boldest bid yet to break NVIDIA's dominance of the AI accelerator market.

Designed specifically for AI workloads, the MI300X carries up to 192GB of memory, making it well suited to running large models such as LLMs. The high memory configuration is a strategic choice that makes large models easier to deploy. AI training requires massive computational power, but it also needs a great deal of VRAM to hold model data during training. This is why some gaming GPUs that are good at mining crypto are poorly suited to AI tasks.

The chip's underlying architecture has also been engineered to handle generative AI workloads. AMD CEO Lisa Su points out that the latest generation of leading-edge models can easily fit in the MI300X’s 192GB of HBM3 (high-bandwidth memory).

“With all of that additional memory capacity, we actually have an advantage for large language models because we can run larger models directly in memory,” Su said, explaining that customers will need fewer GPUs to accomplish the same tasks in less time.
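Su's point about needing fewer GPUs comes down to simple arithmetic: a model's weights alone take up roughly its parameter count multiplied by the bytes stored per parameter, and the fewer accelerators it takes to hold that, the less the model has to be split across devices. The following Python snippet is a rough back-of-the-envelope sketch, not AMD's sizing method. It assumes 16-bit weights (2 bytes per parameter), ignores activations, KV cache and framework overhead, and uses an 80GB card (the capacity of NVIDIA's H100 SXM) as a comparison point. The 70-billion-parameter model is hypothetical.

```python
import math


def weight_memory_gb(num_params: float, bytes_per_param: int = 2) -> float:
    """Memory needed just to store the model weights, in decimal GB (1 GB = 1e9 bytes).

    Assumes 16-bit (2-byte) weights by default.
    """
    return num_params * bytes_per_param / 1e9


def gpus_needed(num_params: float, gpu_memory_gb: float, bytes_per_param: int = 2) -> int:
    """Minimum number of accelerators whose combined memory can hold the weights.

    Ignores activations, KV cache and framework overhead, so this is a lower bound.
    """
    return math.ceil(weight_memory_gb(num_params, bytes_per_param) / gpu_memory_gb)


MI300X_GB = 192      # capacity cited in the article
EIGHTY_GB_CARD = 80  # comparison point, e.g. an 80GB H100 SXM

falcon_40b = 40e9    # nominal parameter count taken from the model's name
hypothetical_70b = 70e9  # hypothetical model, for illustration only

print(weight_memory_gb(falcon_40b))                  # 80.0
print(gpus_needed(falcon_40b, MI300X_GB))            # 1
print(gpus_needed(hypothetical_70b, EIGHTY_GB_CARD)) # 2
print(gpus_needed(hypothetical_70b, MI300X_GB))      # 1
```

Under these assumptions, Falcon 40B's weights come to about 80GB and fit on one MI300X with plenty of room to spare, while the hypothetical 70B model (about 140GB) would need two 80GB cards but only one MI300X. Real deployments need headroom beyond the weights, so these figures are best read as lower bounds.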

The launch comes as NVIDIA’s market capitalization has hit the $1 trillion mark. While AMD's market cap doesn't compare (it’s just over $207 billion), the release of the MI300X underscores AMD's determination to make its mark in the expanding AI landscape.

During the chip's reveal, AMD demonstrated the Falcon 40B LLM running on the MI300X. According to Su, this marks the “first time an LLM of this size can be run entirely in memory.”

AMD: A Powerful Contender to NVIDIA’s Dominance of the AI Space

Alongside the chip, AMD released an update to ROCm, its software stack for graphics processing unit (GPU) programming and a robust contender to NVIDIA's CUDA platform. The MI300X's large memory capacity could persuade companies to buy fewer GPUs, making AMD a compelling value proposition, particularly for smaller firms with light to medium AI workloads.

AMD has profited greatly from the AI hype, with its share price surging this year. The company’s rise has not only drawn investors' attention but also generated positive chatter across the industry.

Can AMD become the next NVIDIA? It's a tough call, given the vast gap in their market capitalizations. One thing is certain, however: AMD's MI300X AI accelerator is a force to be reckoned with.

In this AI-driven arena, it's not just about the size of the player, but also the strength of their game. As AMD steps up to the plate, the world will be watching to see if this underdog can hit a home run.