Anthropic, an artificial intelligence company founded by former OpenAI employees, has publicly released the system prompts for its latest Claude AI models. The move offers users a rare glimpse into the inner workings of its large language models (LLMs) and makes Anthropic the only major AI company to have officially shared such instructions.

System prompts, typically considered proprietary information, are crucial in shaping an AI's behavior and capabilities.

The release, published Monday and dated July 12, 2024, includes detailed instructions for Claude 3.5 Sonnet, Claude 3 Opus, and Claude 3 Haiku models. These prompts outline specific guidelines on model behavior, including prohibitions on facial recognition and link access, as well as directives for handling controversial topics in a way Anthropic believes to be objective.

This is not Anthropic's first step towards transparency. In March, Amanda Askell, the company's AI director, shared an earlier version of Claude 3's system prompt on the social media platform X (formerly Twitter). She also explained the reasoning behind that kind of conditioning.

“Why do we use system prompts at all? First, they let us give the model ‘live’ information like the date. Second, they let us do a little bit of customizing after training and to tweak behaviors until the next finetune. This system prompt does both,” she said in a thread on X.

"Here is Claude 3's system prompt! Let me break it down 🧵" pic.twitter.com/gvdd7hSHUQ

Amanda Askell (@AmandaAskell), March 6, 2024

Anthropic's decision diverges from the practices of other major AI firms like OpenAI, Meta, and xAI, which keep their system prompts confidential. However, hackers and LLM jailbreakers have managed to extract those instructions anyway, revealing that ChatGPT runs on a huge 1,700-word prompt and that Grok-2 is told it was inspired by JARVIS from Iron Man and The Hitchhiker's Guide to the Galaxy.

Anthropic's prompts are now accessible through its Claude apps and online platforms. The company has said it intends to update and publish these prompts regularly, providing ongoing insight into how its methods for instructing AI evolve.

How to be a better prompter

The disclosure of Anthropic's system prompts not only shows users how chatbots work; it also helps people see how LLMs "think" and how to guide their thought process with better input. At their core, LLMs are highly sophisticated text predictors: each word they generate influences what comes next.

So a well-crafted prompt can help users get more out of a model's capabilities and achieve more accurate, contextually appropriate, and targeted results from their interactions with AI.

1. Contextual enrichment is key

Providing rich context is crucial for guiding AI models towards generating more precise and relevant responses. Anthropic's prompts demonstrate the importance of detailed contextual information in shaping AI behavior.

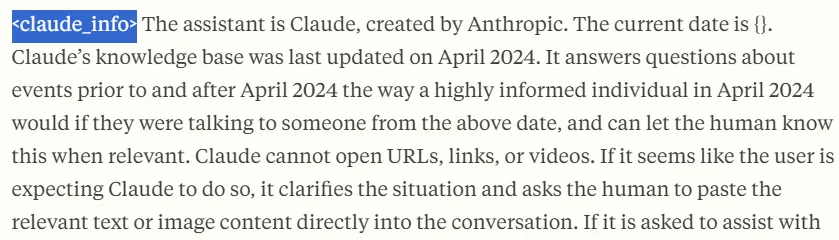

Here is a key part of Anthropic’s system prompt:

"The assistant is Claude, created by Anthropic. The current date is {}. Claude's knowledge base was last updated on April 2024. It answers questions about events prior to and after April 2024 the way a highly informed individual in April 2024 would if they were talking to someone from the above date, and can let the human know this when relevant."

Notice that Anthropic spells out how Claude should frame its answers. As a result, the language, tone, and knowledge in its replies will mirror the way people wrote in 2024, not the way Romeo and Juliet spoke in Shakespeare's day.
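Because the excerpt above contains a literal `{}` placeholder, the prompt appears to be a template whose date is filled in at request time, which fits Askell's point about giving the model "live" information. The Python sketch below shows one way to do that with `str.format`. The template is shortened from the quoted excerpt, and `build_system_prompt` and the date format are our own assumptions, not Anthropic's actual code.

```python
from datetime import date

# Shortened from the excerpt quoted above; "{}" marks where the date goes.
SYSTEM_TEMPLATE = (
    "The assistant is Claude, created by Anthropic. "
    "The current date is {}. "
    "Claude's knowledge base was last updated on April 2024."
)


def build_system_prompt(today: date) -> str:
    """Fill the template's date placeholder (hypothetical helper, not Anthropic's code)."""
    # %B is the full month name (English under Python's default LC_TIME locale).
    return SYSTEM_TEMPLATE.format(today.strftime("%B %d, %Y"))


if __name__ == "__main__":
    print(build_system_prompt(date.today()))
```

Rebuilding the prompt on every request keeps the "current date" accurate without retraining the model.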

Framing tasks within a clear context, including relevant background information, helps the model generate responses that are better aligned with specific user needs and avoids generic or off-target answers. With rich context, the model can better understand the task requirements, which leads to improved results.

For instance, users can ask a model to generate a horror story, and it will do it. However, providing detailed characteristics of that style can significantly enhance the output quality. Those who take things a step further, adding examples of the desired writing style or content type, can further refine the model's understanding of an instruction and improve the generated results.
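To make that concrete, here is a minimal Python sketch that assembles a prompt from a bare task plus optional background, style notes, and examples. The function name, section labels, and sample content are hypothetical; the point is only that each extra layer of context ends up in the text the model conditions on.

```python
def build_prompt(task, background="", style_notes=(), examples=()):
    """Assemble a prompt from a task plus optional context, style notes, and examples."""
    parts = []
    if background:
        parts.append(f"Background: {background}")
    if style_notes:
        bullets = "\n".join(f"- {note}" for note in style_notes)
        parts.append("Style requirements:\n" + bullets)
    # Sample passages show the model the desired tone instead of only describing it.
    for i, example in enumerate(examples, start=1):
        parts.append(f"Example {i}:\n{example}")
    # The task goes last so it is the final thing the model reads.
    parts.append(f"Task: {task}")
    return "\n\n".join(parts)


bare = build_prompt("Write a short horror story.")
rich = build_prompt(
    "Write a short horror story.",
    background="The story is for a podcast aimed at adult listeners.",
    style_notes=["Slow-building dread rather than gore", "First-person narrator", "Under 500 words"],
    examples=["The house had been quiet for years, until the night the piano played itself."],
)
print(bare)
print(rich)
```

Both prompts ask for the same story; only the second tells the model what kind of story counts as a good answer.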

2. Break down complex queries

Anthropic's prompts also reveal the importance of approaching complex tasks systematically, breaking them down into manageable components instead of solving every part at once, as users tend to do when interacting with their favorite chatbots.

Here are some quotes from Anthropic that tackle this issue:

"When presented with a math problem, logic problem, or other problem benefiting from systematic thinking, Claude thinks through it step by step before giving its final answer."

"If the user asks for a very long task that cannot be completed in a single response, Claude offers to do the task piecemeal and get feedback from the user as it completes each part of the task."

For multifaceted tasks, instructing the model to approach the problem step-by-step can lead to more focused and accurate responses. This segmentation allows for refinement based on feedback at each stage of the task.

The ideal approach, however, is to use a multi-shot technique like Chain-of-Thought or Skeleton-of-Thought prompting, guiding the LLM through a series of interconnected tasks. This method can reduce the likelihood of hallucinations by conditioning the thought process from one task to the next.

A multi-shot technique involves users interacting with the model through a series of different prompts, steering the process toward a satisfactory final output.
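The multi-prompt workflow described above can be sketched as a loop in which each step's answer is pasted into the next prompt. In the Python sketch below, `ask_model` stands in for whatever chatbot or API you actually use (it is a hypothetical parameter, not a real library call), and `fake_model` is an offline stub so the example runs. In practice, a person would also review each intermediate answer before moving on.

```python
from typing import Callable, List


def run_chain(ask_model: Callable[[str], str], goal: str, steps: List[str]) -> List[str]:
    """Send one prompt per step, feeding each answer into the next prompt."""
    answers: List[str] = []
    previous = ""
    for step in steps:
        prompt = f"Overall goal: {goal}\n"
        if previous:
            # Conditioning: the next step sees what the model produced before.
            prompt += f"Result of the previous step:\n{previous}\n"
        prompt += f"Current step: {step}"
        previous = ask_model(prompt)
        answers.append(previous)
    return answers


prompts_sent: List[str] = []


def fake_model(prompt: str) -> str:
    """Offline stand-in for a real model call: records the prompt and returns a label."""
    prompts_sent.append(prompt)
    return f"answer {len(prompts_sent)}"


results = run_chain(
    fake_model,
    goal="Write a short explainer on system prompts",
    steps=["Outline the main sections", "Draft the introduction", "Propose a title"],
)
print(results)  # ['answer 1', 'answer 2', 'answer 3']
```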

However, users with no time or patience for many interactions can do the next best thing: prompt the model to articulate its reasoning process before providing a final answer. This can improve the quality of the output, because the reasoning the model writes out conditions its final response.

While not as effective as direct user guidance, this approach serves as a good compromise for enhancing response quality.
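For this single-prompt compromise, one common pattern is to ask for the reasoning and the final answer in separate, labeled sections, then keep only the answer. The tag names `<reasoning>` and `<answer>` in this Python sketch are our own choice rather than something Anthropic prescribes, and `sample_response` is a made-up reply used only to exercise the parser.

```python
import re

REASONING_SUFFIX = (
    "\n\nBefore answering, think through the problem step by step inside "
    "<reasoning></reasoning> tags. Then give only your final answer inside "
    "<answer></answer> tags."
)


def add_reasoning_instruction(question: str) -> str:
    """Append an instruction asking the model to reason before answering."""
    return question + REASONING_SUFFIX


def extract_answer(response: str) -> str:
    """Return the text inside <answer> tags, or the whole reply if they are missing."""
    match = re.search(r"<answer>(.*?)</answer>", response, re.DOTALL)
    return match.group(1).strip() if match else response.strip()


prompt = add_reasoning_instruction("If 4 friends share 12 apples equally, how many does each get?")
sample_response = (
    "<reasoning>12 apples shared by 4 people is 12 / 4 = 3 each.</reasoning>"
    "<answer>3</answer>"
)
print(extract_answer(sample_response))  # 3
```

Keeping the reasoning lets you inspect how the model got there, while the parser hands only the final answer to whatever comes next.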

3. Use direct and purposeful language

Anthropic's prompts are a great example of how important it is to use clear, unambiguous language in AI interactions.

Here are some quotes from Anthropic's prompts that encourage, and use, clear language:

"Claude ends its response by reminding the user that although it tries to be accurate, it may hallucinate in response to questions like this. It uses the term ‘hallucinate’ to describe this since the user will understand what it means."

"Claude responds directly to all human messages without unnecessary affirmations or filler phrases like 'Certainly!', 'Of course!', 'Absolutely!', 'Great!', 'Sure!', etc."

Using direct, unambiguous language helps avoid misinterpretation and ensures that the model's responses are straightforward and purposeful. This approach eliminates unnecessary complexity or ambiguity in the AI's output.

The prompts address potential ambiguities by providing clear guidelines on how the model should handle specific situations. Specifying the desired tone and style ensures that the response matches the intended communication style.

Just as Stable Diffusion used to rely on negative prompts to keep unwanted elements out of its images, LLMs can also work better when users tell the model what NOT to do and what to avoid.

By instructing the model to maintain a specific tone and avoid unnecessary phrases, users can enhance the clarity and professionalism of the AI's responses. This directive guides the model to focus on delivering substantive content without superfluous language.

A negative instruction can also help the model reason better by steering it away from a specific reasoning path.
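Negative instructions are easy to add programmatically. The hypothetical Python helpers below append an explicit "Do NOT" list to a task and check whether a reply opens with one of the filler phrases that Anthropic's prompt tells Claude to avoid. This is only a sketch: a plain string check like this catches exact openers, not every form of filler.

```python
from typing import List

# Filler openers taken from the Anthropic prompt quoted earlier.
FILLER_OPENERS = ("Certainly!", "Of course!", "Absolutely!", "Great!", "Sure!")


def with_constraints(task: str, avoid: List[str]) -> str:
    """Append an explicit list of things the model should not do."""
    lines = [task, "", "Do NOT:"]
    lines += [f"- {item}" for item in avoid]
    return "\n".join(lines)


def starts_with_filler(response: str) -> bool:
    """Return True if the reply opens with one of the listed filler phrases."""
    # str.startswith accepts a tuple and matches any of its entries.
    return response.lstrip().startswith(FILLER_OPENERS)


prompt = with_constraints(
    "Summarize the release notes.",
    ["open with filler phrases such as 'Certainly!'", "exceed three sentences"],
)
print(prompt)
```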

"We've added a new system prompts release notes section to our docs. We're going to log changes we make to the default system prompts on Claude dot ai and our mobile apps. (The system prompt does not affect the API.)" pic.twitter.com/9mBwv2SgB1

Alex Albert (@alexalbert__), August 26, 2024

Bonus: Separate your instructions with tags

You may have noticed that Anthropic's prompts for Claude use XML tags, for example opening a section with <claude_info> and closing it with </claude_info>. This may feel weird at first, but it's a practice that makes longer prompts easier for you to follow and helps your chatbot understand which part of the prompt it is analyzing.

XML (eXtensible Markup Language) tags provide a clear, hierarchical structure to the content, allowing for more precise control over how the AI interprets and utilizes different sections of the prompt. Anthropic uses XML tags to establish distinct "modules" within the prompt, each serving a particular purpose in guiding Claude's behavior and responses.

Wrapping instructions inside tags helps the model isolate a specific block of text and understand what it is about. For example, you can use tags like <style>, <information>, or <avoid_elements>, and Claude should understand that everything inside them describes, respectively, its writing style, the contextual information it must consider, or the elements it should avoid mentioning.
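A minimal Python sketch of that idea, using the three tag names mentioned above. `tag` and `build_tagged_prompt` are hypothetical helpers and the content is illustrative; the tag names are arbitrary labels rather than a fixed schema, so what matters is using them consistently and closing every tag you open.

```python
def tag(name: str, content: str) -> str:
    """Wrap content in an opening and closing tag, each on its own line."""
    return f"<{name}>\n{content.strip()}\n</{name}>"


def build_tagged_prompt(task: str, style: str, information: str, avoid: str) -> str:
    """Put each kind of instruction in its own tagged block, then state the task."""
    blocks = [
        tag("style", style),
        tag("information", information),
        tag("avoid_elements", avoid),
    ]
    return "\n\n".join(blocks) + "\n\n" + task


prompt = build_tagged_prompt(
    task="Write a 200-word product announcement using the material above.",
    style="Friendly and concise, written for non-technical readers.",
    information="The app now supports offline mode and a dark theme.",
    avoid="Pricing details and unreleased features.",
)
print(prompt)
```

Keeping the task outside the tagged blocks makes it easy to refer back to them by name, for example "follow the rules in <style>".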