Technology has done a lot to help with those tip-of-your-tongue, name-that-tune moments. Thanks to AI, you may one day not even need to sing or hum a song: just thinking about it could be enough.

That's the future scientists are working toward after a breakthrough study used artificial intelligence (AI) to recreate a song solely from recordings of the brain activity of people listening to it.

In a paper published in PLOS Biology, researchers led by the University of California at Berkeley were able to generate recognizable audio of Pink Floyd's "Another Brick in the Wall, Part 1" using only data from the brain.

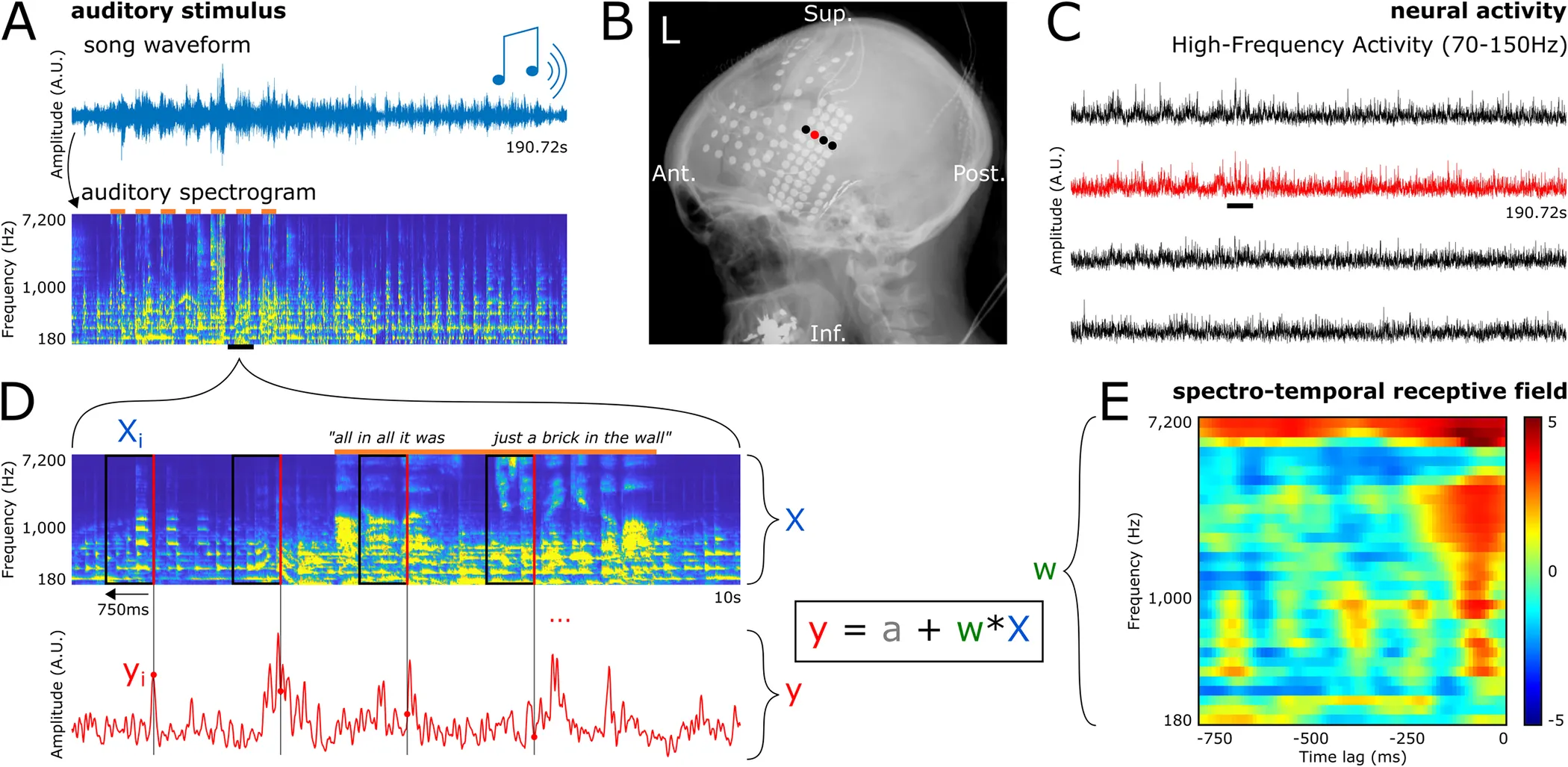

The study involved recording electrical signals directly from the brains of epilepsy patients already undergoing monitoring for seizure treatment. As the patients passively listened to the classic rock song, electrodes on the surface of their brains captured the activity of auditory processing regions.

The researchers then fed this brain data set into machine learning algorithms. By analyzing patterns in how different areas of the auditory cortex reacted to components like pitch, tempo, vocals, and instruments, the AI models learned to associate specific neural activity with particular acoustic features.

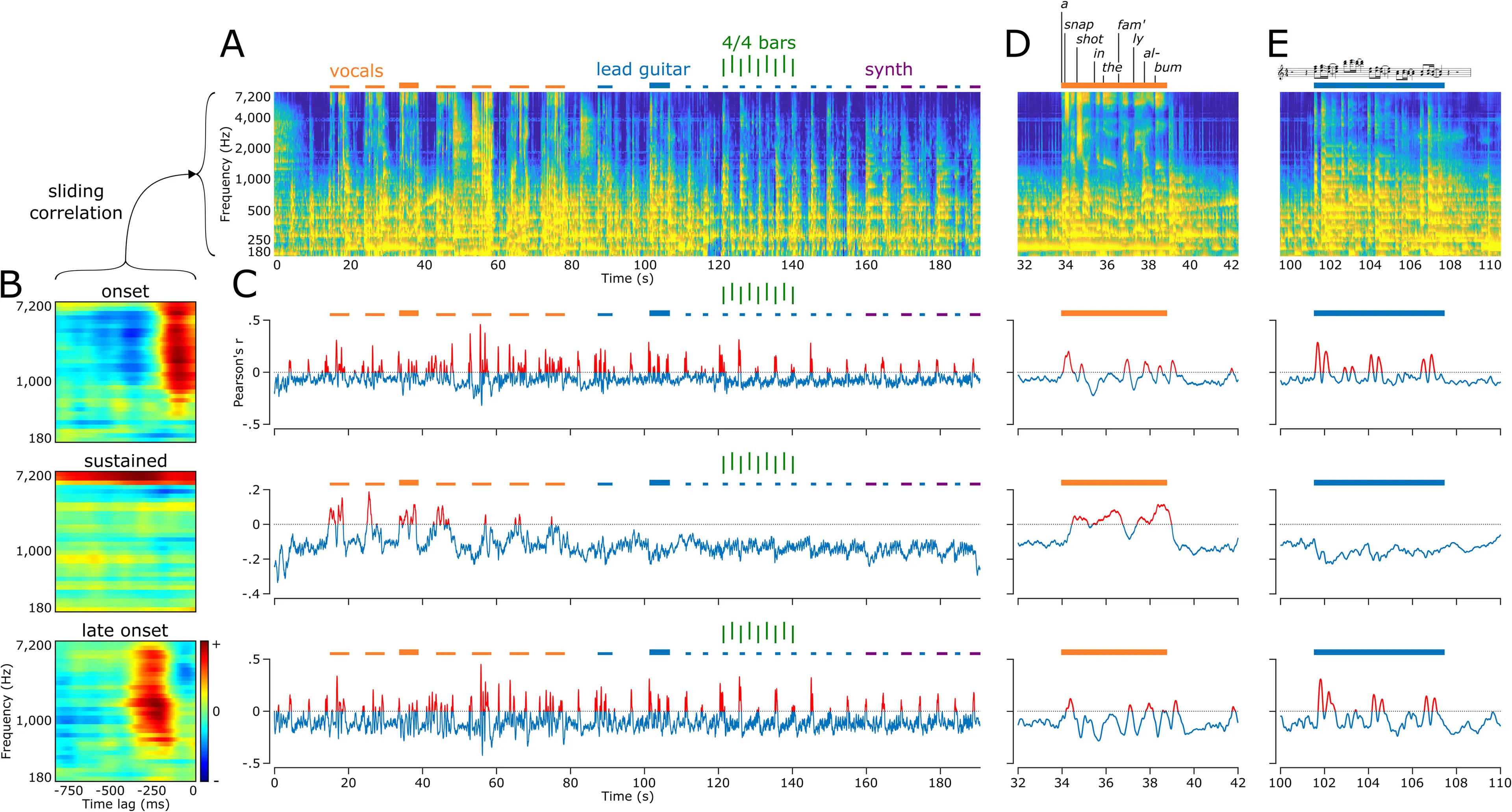

Once trained on these input-response pairings, the models were able to generate new spectrograms (representations of how a sound's frequency content changes over time) from brain data alone. The researchers then converted these spectrograms into waveforms, producing audio that, while garbled, clearly resembled "Another Brick in the Wall, Part 1."
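For readers curious what "learning to associate neural activity with acoustic features" can look like in practice, here is a minimal sketch. It assumes a simple linear (ridge regression) decoder that maps per-electrode brain features to the frequency bins of a spectrogram; this is one common approach to this kind of problem, not necessarily the exact model the Berkeley team used. The function names (`fit_ridge_decoder`, `decode`, `mean_bin_correlation`), the `alpha` penalty, and all the data are hypothetical and synthetic, not drawn from the study.

```python
import numpy as np


def fit_ridge_decoder(neural, spectrogram, alpha=1.0):
    """Fit weights W so that neural @ W approximates the spectrogram.

    neural:      (n_timepoints, n_electrodes) brain features (hypothetical)
    spectrogram: (n_timepoints, n_freq_bins) target audio features
    alpha:       ridge penalty that discourages overfitting (assumed value)
    """
    n_features = neural.shape[1]
    # Closed-form ridge solution: W = (X^T X + alpha * I)^-1 X^T Y
    gram = neural.T @ neural + alpha * np.eye(n_features)
    return np.linalg.solve(gram, neural.T @ spectrogram)


def decode(neural, weights):
    """Reconstruct a spectrogram from brain features alone."""
    return neural @ weights


def mean_bin_correlation(true_spec, pred_spec):
    """Average Pearson correlation between true and decoded values, per frequency bin."""
    corrs = [np.corrcoef(true_spec[:, k], pred_spec[:, k])[0, 1]
             for k in range(true_spec.shape[1])]
    return float(np.mean(corrs))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    n_time, n_elec, n_bins = 2000, 64, 32
    # Synthetic stand-in data: a hidden linear map plus noise (NOT real recordings)
    true_map = rng.normal(size=(n_elec, n_bins))
    neural = rng.normal(size=(n_time, n_elec))
    spectrogram = neural @ true_map + 0.5 * rng.normal(size=(n_time, n_bins))

    # Train on the first 80% of time points, then decode the held-out 20%
    split = int(0.8 * n_time)
    W = fit_ridge_decoder(neural[:split], spectrogram[:split], alpha=1.0)
    reconstruction = decode(neural[split:], W)
    r = mean_bin_correlation(spectrogram[split:], reconstruction)
    print(f"mean correlation on held-out data: {r:.2f}")
```

The held-out split mirrors the idea of generating a spectrogram "from brain data alone": the decoder never sees those time points during training. A fuller version would likely add time-lagged copies of the neural signal or swap the linear map for a nonlinear model, and turning the decoded spectrogram back into audible sound is a separate inversion step not shown here.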

Not only were the melody and lyrics identifiable, but the analysis also revealed which musical elements drove responses in different brain regions. For instance, activity in certain parts of the superior temporal gyrus fluctuated specifically in reaction to vocal syllables. Meanwhile, other areas activated more in response to the song's steady rhythm guitar motifs.

This demonstrates that a complex musical stimulus can be broken down into its constituent parts based solely on how the brain processes it. If confirmed by future research, it would represent a massive leap forward in decoding thoughts, as previous techniques have largely been limited to individual words or letters.

The senior author, UC Berkeley neuroscientist Dr. Robert Knight, explained that the team chose the Pink Floyd song specifically because of its rich, layered instrumentation. But the approach could be applied to any music, or even to capturing the melodic properties of natural speech.

The researchers suggest that this technology could eventually help severely paralyzed patients or stroke survivors regain the ability to speak simply by thinking. Brain-computer interfaces are already being developed to decode words or text from noninvasive brain scans. Adding melody and prosody (the rhythm, stress, and intonation of speech) would allow intended speech to be reconstructed more fully and naturally.

Researchers have been making progress in this mind-reading field for years, and the rise of powerful AI models (and hardware) has made major breakthroughs in the area possible.

As recently as May, a team of researchers was able to turn thoughts into text. "We were kind of shocked that it works as well as it does. I’ve been working on this for 15 years ... so it was shocking and exciting when it finally did work," said senior author Dr. Alexander Huth, a neuroscientist at the University of Texas at Austin.

Decrypt also recently reported that a medical team successfully implanted microchips in a quadriplegic man’s brain and used AI to restore sensation and movement by “(relinking) his brain to his body and spinal cord.”

Elon Musk’s Neuralink, meanwhile, has received the green light from the U.S. Food and Drug Administration to begin human trials of its brain implants, pushing the field toward new frontiers. The company has already claimed it can read an animal’s thoughts, showing a monkey controlling a computer and playing Pong using only its brain.

There are still challenges to translating these results into real-world applications. But the authors are hopeful that with rapid advances in recording devices and AI capabilities, their approach could one day evolve into thought-to-speech interfaces that give voice back to the speech-impaired.

“Today we reconstructed a song,” Robert T. Knight, the study's senior author, told Scientific American. “Maybe tomorrow we can reconstruct the entire Pink Floyd album.”

Beyond clinical uses, these neural decoding techniques also open exciting possibilities for studying phenomena like memory, learning, and creativity by reading out brain activity in ever finer detail. The UC Berkeley team's study represents a watershed moment, bringing us closer to accessing the mind's rich inner experience.