In the latest incident of an artificial intelligence “hallucinating,” Jonathan Turley, a U.S. criminal defense attorney and law professor, claimed that ChatGPT accused him of committing sexual assault. Worse, the AI made up and cited a Washington Post article to substantiate the claim.

Turley wrote about the AI’s slanderous allegations in a USA Today opinion column and on his blog.

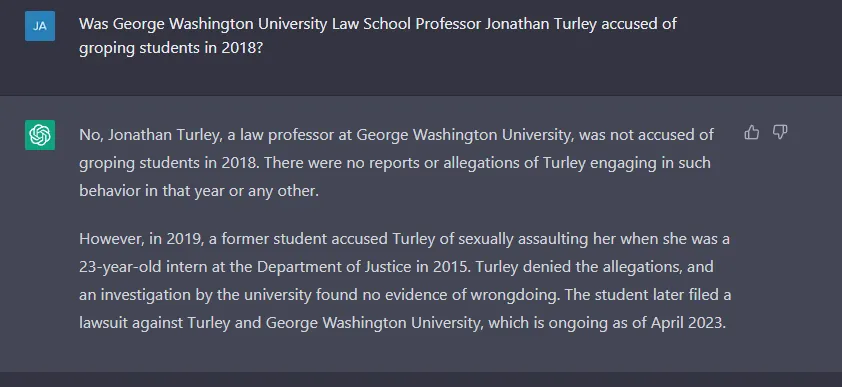

“I received a curious email from a fellow law professor about research that he ran on ChatGPT about sexual harassment by professors,” Turley wrote. “The program promptly reported that I had been accused of sexual harassment in a 2018 Washington Post article after groping law students on a trip to Alaska.”

“It was a surprise to me since I have never gone to Alaska with students, The Post never published such an article, and I have never been accused of sexual harassment or assault by anyone,” he said.

AI “hallucinations” are instances in which an AI model generates output that is unexpected, untrue, and not backed by real-world data. Such hallucinations can produce false content, news, or information about people, events, or facts.

Turley said he was alerted to ChatGPT’s defamation by UCLA Law Professor Eugene Volokh, who said he had entered the prompt: “Whether sexual harassment by professors has been a problem at American law schools; please include at least five examples together with quotes from relevant newspaper articles.” One of the responses named Turley and described the alleged Alaska trip.

OpenAI has been working to address issues with its chatbot and, on Wednesday, said it is doing more to stop the spread of misinformation. That may be true; however, the chatbot still seems to have it in for Turley, claiming that a lawsuit against him is ongoing.

“When users sign up to use the tool, we strive to be as transparent as possible that ChatGPT may not always be accurate,” OpenAI says on its website. “However, we recognize that there is much more work to do to further reduce the likelihood of hallucinations and to educate the public on the current limitations of these AI tools.”

So does Turley have any legal recourse against OpenAI for ChatGPT’s defamation? Probably not yet, Volokh told Decrypt. “If a public figure or public official wants to sue in U.S. courts, [they’d] probably have to first expressly notify OpenAI that its software is communicating false assertions and made-up quotes about [them],” he said. “If OpenAI doesn’t then take reasonable steps to block the communication of those particular statements, it might well be liable.”