In a breakthrough that could save marriages, friendships, and countless misunderstandings, scientists say they have built an AI-powered sarcasm detection tool.

A group of researchers from the University of Groningen in the Netherlands claim they have developed an AI system that can tell whether you're being straight and serious, or deploying some sly, humorous wit.

Their work, which combines text and voice analysis, was presented today at a meeting of the Acoustical Society of America in Ottawa. The team is led by graduate student Xiyuan Gao, who has been "tackling the challenge of figurative language" as part of her academic research.

"The specific architecture we applied in the model integration part is the attention mechanism. We use it to capture the relationship between text and audio, emotion and sentiment," Gao told Decrypt.
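To make Gao's description concrete, here is a minimal sketch of generic scaled dot-product cross-attention, in which text-token features attend over audio-frame features. It is not the team's model: the feature vectors, dimensions, and function names are hypothetical, and a real system would use learned projections and a deep learning framework rather than plain Python lists.

```python
import math
from typing import List, Tuple

Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax: subtract the max score before exponentiating."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def cross_attention(
    queries: List[Vector], keys: List[Vector], values: List[Vector]
) -> Tuple[List[Vector], List[List[float]]]:
    """Scaled dot-product attention where each query (e.g. a text-token
    feature) attends over keys/values (e.g. audio-frame features).

    Returns the attended outputs and the attention weights for each query.
    """
    scale = math.sqrt(len(keys[0]))
    outputs: List[Vector] = []
    all_weights: List[List[float]] = []
    for q in queries:
        weights = softmax([dot(q, k) / scale for k in keys])
        # Weighted sum of the value vectors, one output dimension at a time.
        out = [
            sum(w * v[i] for w, v in zip(weights, values))
            for i in range(len(values[0]))
        ]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


if __name__ == "__main__":
    # Hypothetical toy features: 2 text tokens, 3 audio frames, dimension 2.
    text_feats = [[1.0, 0.0], [0.0, 1.0]]
    audio_feats = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
    fused, weights = cross_attention(text_feats, audio_feats, audio_feats)
    for row in weights:
        print([round(w, 3) for w in row])
```

Each row of weights sums to one, so the fused output for a text token is a weighted blend of audio frames, with more weight on the frames whose features align with that token.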

A research paper elaborates on the team's approach to sarcasm detection.

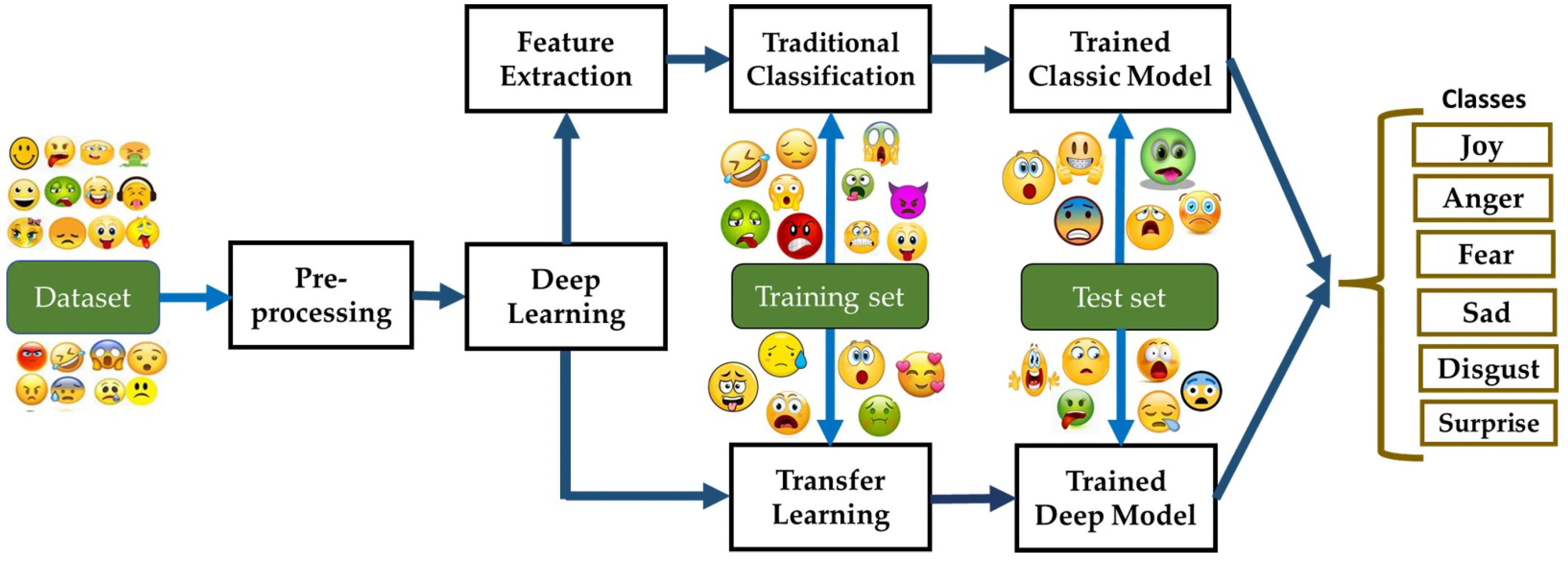

First, they trained a neural network on multi-modal data—audio clips, transcribed text, and annotated emotional content—from sarcasm-laden scenes in sitcoms like "Friends" and "The Big Bang Theory" obtained from the MUStARD database.

Next, they developed an algorithm to map emotional cues from the audio and text, and assigned appropriate emoticons based on sentiment analysis. "For instance, as the discrepancy between emotion and sentiment is defined as a cue for sarcasm, attention mechanisms can capture the irrelevance of two modalities, assigning higher weights to it," Gao explains.
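The cue Gao describes, a mismatch between the emotion heard in the voice and the sentiment of the words, can be illustrated with a simple rule-based sketch. This is a stand-in for intuition only: the team's model learns this signal through attention rather than a fixed threshold, and the emotion-to-valence table, the assumption that a text sentiment analyzer returns a score in [-1, 1], and the function names are all hypothetical.

```python
from typing import Dict

# Hypothetical valence for each audio emotion label, in [-1, 1].
EMOTION_VALENCE: Dict[str, float] = {
    "joy": 1.0,
    "surprise": 0.2,
    "neutral": 0.0,
    "fear": -0.6,
    "sadness": -0.8,
    "anger": -1.0,
    "disgust": -1.0,
}


def incongruity(audio_emotion: str, text_sentiment: float) -> float:
    """Score in [0, 1]: 0 when the voice's emotion and the text's sentiment
    agree, 1 when they sit at opposite extremes."""
    if not -1.0 <= text_sentiment <= 1.0:
        raise ValueError("text_sentiment must lie in [-1, 1]")
    valence = EMOTION_VALENCE[audio_emotion]
    return abs(valence - text_sentiment) / 2.0


def looks_sarcastic(audio_emotion: str, text_sentiment: float,
                    threshold: float = 0.5) -> bool:
    """Flag an utterance when the cross-modal mismatch exceeds a threshold."""
    return incongruity(audio_emotion, text_sentiment) >= threshold


if __name__ == "__main__":
    # Positive words ("Oh great, another Monday") delivered in an angry tone.
    print(looks_sarcastic("anger", 0.9))
    # Positive words delivered joyfully.
    print(looks_sarcastic("joy", 0.9))
```

In a learned model, a score like this would not be thresholded directly; the point is only that the disagreement between the two modalities, rather than either one alone, carries the signal.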

The team's multi-faceted approach made the AI better at detecting sarcasm, outperforming tools that rely on text and voice pitch changes alone, despite a relatively limited training dataset. "Different from previous approaches focusing on data fusion, our aim is based on limited data (only auditory in our case), focusing on data augmentation to boost data, we are aiming at data scarcity issues (in sarcasm dataset, or low resource language)," Gao told Decrypt.
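The article does not say which augmentation techniques the team applied to its audio, so the following is a generic sketch assuming three common waveform transforms: a random gain change, a circular time shift, and additive Gaussian noise. The function names and parameter ranges are hypothetical; the idea is simply that each original clip yields several slightly altered copies that keep the same sarcasm label.

```python
import random
from typing import List

Waveform = List[float]


def change_gain(wave: Waveform, factor: float) -> Waveform:
    """Scale every sample, simulating a louder or quieter recording."""
    return [s * factor for s in wave]


def time_shift(wave: Waveform, shift: int) -> Waveform:
    """Circularly shift samples by `shift` positions (positive = later)."""
    if not wave:
        return []
    shift %= len(wave)
    if shift == 0:
        return list(wave)
    return wave[-shift:] + wave[:-shift]


def add_noise(wave: Waveform, rng: random.Random,
              noise_level: float = 0.005) -> Waveform:
    """Add zero-mean Gaussian noise with standard deviation `noise_level`."""
    return [s + rng.gauss(0.0, noise_level) for s in wave]


def augment(wave: Waveform, n_copies: int, seed: int = 0) -> List[Waveform]:
    """Produce `n_copies` randomly perturbed versions of one clip.

    A seeded generator keeps the augmented dataset reproducible.
    """
    if not wave:
        raise ValueError("cannot augment an empty waveform")
    rng = random.Random(seed)
    copies: List[Waveform] = []
    for _ in range(n_copies):
        w = change_gain(wave, rng.uniform(0.8, 1.2))
        w = time_shift(w, rng.randrange(len(wave)))
        w = add_noise(w, rng)
        copies.append(w)
    return copies


if __name__ == "__main__":
    clip = [0.0, 0.1, -0.1, 0.2, 0.05]  # Toy stand-in for audio samples.
    for copy in augment(clip, 3, seed=42):
        print([round(s, 3) for s in copy])
```

Real pipelines usually work on sampled audio arrays and may add pitch or tempo perturbation, but the principle of multiplying scarce labeled clips is the same.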

"Our methodology leverages the strengths of each modality: emotion recognition algorithms analyze the audio data for affective cues, while sentiment analysis processes the text," the research paper explains. "The integration of these modalities aims to compensate for limitations in pitch perception."

The AI detected sarcasm in new sitcom scenes with around 75% accuracy. The researchers admit, however, that humans still edge out machines in this particular perception test.

"When you start studying sarcasm, you become hyper-aware of the extent to which we use it as part of our normal mode of communication," Matt Coler, another researcher working on the project, told The Guardian. "We have to speak to our devices in a very literal way, as if we're talking to a robot, because we are."

"It doesn't have to be this way," he added.

Gao shed more light on the researchers' motivation in comments to Decrypt: "The research aims to develop sarcasm detection algorithms that can be used to assist people with neurodegenerative diseases (e.g., semantic dementia, autism), because of impairments in auditory function, emotion processing or perspective taking, they suffer from sarcasm perception."

"While sarcasm has social function, detecting sarcasm in conversations helps them with social integration while mitigates social isolation," she told Decrypt. "The whole architecture can be easily applied to detecting irony, hate speech, abuse etc. using relevant application-specific data."

This sarcasm detection tool is only the latest attempt to uncover the hidden sentiment or meaning in human language. Such tools are becoming increasingly important as the explosive adoption of AI chatbots translates into millions of daily conversations with large language models (LLMs).

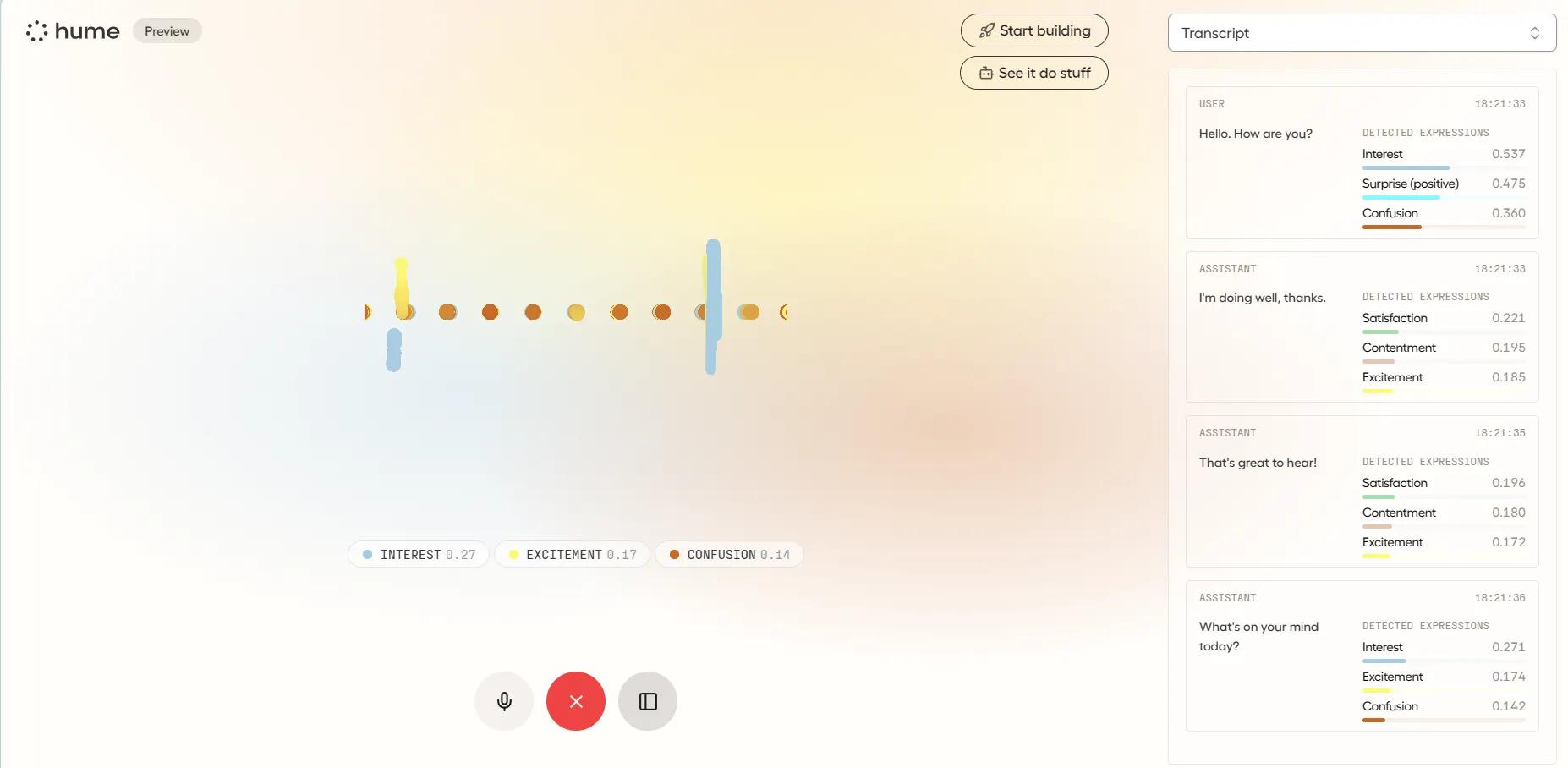

For example, Hume AI says it has developed an AI system that detects different vocal inflections to identify a range of emotions, not just a change in tone or mood. According to the company, Hume is designed to understand and respond to human emotions in a more nuanced and empathetic way, making AI interactions more natural and engaging.

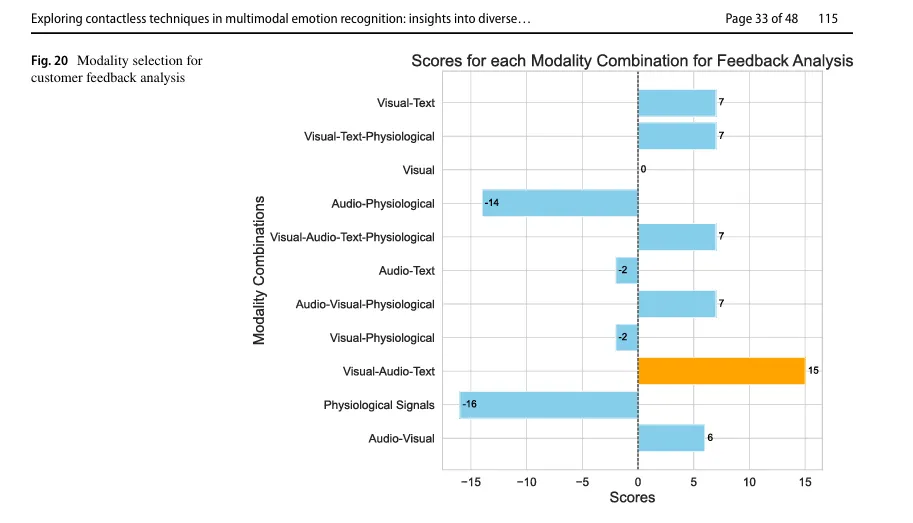

Meanwhile, a team from the Haaga-Helia University of Applied Sciences and the University of Oulu in Finland published a research paper last month exploring contactless techniques in multimodal emotion recognition.

The team similarly found that the most accurate way to analyze emotions is to combine visual, audio, and text cues. However, they emphasized that this type of understanding is still limited by cultural variables, inherent model biases, misinterpretations, and a lack of understanding of past context.

Gao acknowledges these challenges, which are amplified by a lack of proper training data: "Different from big companies such as OpenAI or Apple where they can train a model with thousands of labeled data, the benchmark dataset for sarcasm (MUStARD) contains 4 hours of speech. Our approach to it is to augment more data based on what we have."

Culture is another barrier. "Our model is trained on data collected from American sitcoms, such as The Big Bang Theory, Friends... logically, it performs the best in American English," Gao told Decrypt. "Sarcasm is highly related to language and culture, that's true, such as in Mandarin, as a tonal language, the cue for sarcasm might be related to nuances of tone change."

Other researchers have tried to analyze the use of emojis to better detect the emotional state of the people using them. After all, a text message followed by a winking-face emoji can mean something very different from one without an emoji, or with a different one.

Gao sums up their broader vision: "In the end, we are not only trying to make a machine that detects sarcasm, sarcasm is just one use of the complex human languages, but in the long-term, we aim at making the human-machine interaction more human-oriented."

While a better grasp of conversational nuance will help AI chatbots respond to human prompts, the technology could have far-reaching implications for society in general. In addition to detecting an unstated negative tone or identifying hate speech, it could smooth interpersonal relationships by providing greater clarity in moments of confusion, especially for people communicating across languages or those who are neurodivergent.

Edited by Ryan Ozawa. This article has been updated to include comments from Xiyuan Gao.